Fake Review Attacks Increase on Google Business Profiles

In Episode 102 of the Near Memo, we discussed how a recently implemented change in the Google Business Profile review filter is leading to more fake review attacks. Below is the video, a supporting graph and a transcript.

Transcript

Mike: On February 1st, Google posted an article in the Local Guides Connect forum announcing an update to how they moderate Google Maps reviews:

In the last few weeks, our protections took down more than expected policy abiding reviews from a set of Local Guides. We’ve also closely followed the conversations on Connect around unpublished reviews and we acknowledge that this change has affected a lot of your accounts.

As part of our efforts to resolve this situation, we have launched an update to our protections to fix them. We will also automatically reinstate the policy abiding reviews over the next few weeks if we determine that we made a mistake. If your policy abiding reviews were not reinstated and you'd like us to take a look, please submit a request through this form. Please keep in mind that some reviews may remain private if the content violates our policies.

This is as close to an apology as I've ever seen Google come. I read it and thought, oh, this is public relations stuff. But it made me curious on a number of levels. First, it was an apology to Local Guides, but not to [the many local] businesses [who lost reviews].

Second, this has been happening not for weeks but for months. It started last March with a rollout that I've covered in a discussion of the new review algorithm.

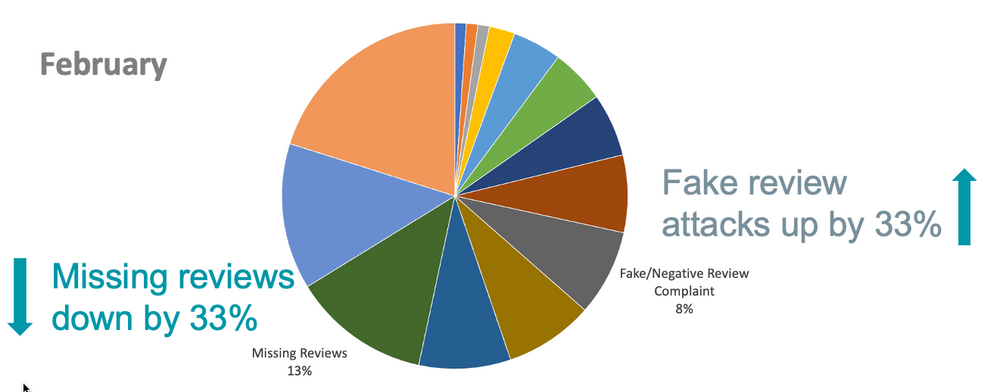

I hypothesized that if they were letting more Local Guide reviews through, I would see fewer reports of missing reviews and more reports of fake review attacks in the GBP forum.

Mike: So I went into the forum, did my monthly tabulation of all of the cases for a subset of days and, lo and behold, missing reviews were down. Reports of missing reviews (in other words, Local Guide reviews getting nuked) were down by 33%, and reports of fake review attacks were up by (drum roll)...

David: 33%

Mike: 33%. So I thought: well, I don't know if it's just bad statistics or luck, but clearly we're seeing the impact in the business forums, where Google didn't apologize at all or make available…

David: And may not be reading as assiduously as they do the Local Guides forum.

Mike: Yeah, or give as much of a shit about. That's right.

Greg: So let me clarify something, Mike. The review attacks that people are complaining about are competitors writing negative reviews about them?

Mike: They are actually hiring companies to use Local Guides to mount a fake review attack. I am consulting with an air conditioning firm in Miami that's been under constant attack, 5, 6, 10 reviews a day, and we've pretty much narrowed down who the likely culprits are, like who's buying reviews...

Greg: These are all negative reviews?

Mike: Yeah. Interestingly, the technique is to initially leave a positive review and then go back and change it.

David: Wow, very interesting.

Mike: Yes, interesting. These are all negative reviews. Very negative.

Greg: And these are all coming out of Local Guide accounts?

Mike: Mostly, not all, but mostly.

Greg: Not all fake reviews are from Local Guides, but Local Guides dominate Google reviews.

Mike: I mean, if you have two legs and two arms... you don't even need two legs and two arms. You just need enough fingers to type a couple of reviews and you become a Local Guide. This is not a particularly esteemed position in life. So anybody, you know. As soon as you leave your second review, Google encourages you to become a Local Guide.

David: How dare you impugn the character of tens of ...

Mike: I'm not impugning their characters. I'm just saying it's a fairly low bar to become a member of that group, of which you and I and Greg are all members.

One of the things this did for me was fully clarify how this new [review] filter works, right? Over the past 15 years that we've been in local, they've had a single review filter that looked at the content and the very limited context of the review. Did it use swear words? Did it have URLs in it? Was it left from the IP address of the business account? Those kinds of things. It was a very limited view of fake reviews.

Now we know that the [new] algorithm looks at the photo. If the photo contains text that breaks those same rules, or if the photo is sexually inappropriate, the review will get removed.

From the report I did a couple of months ago, we know that [the new filter looks at] business characteristics: category, years in business and review velocity. These are all considered. And now we know, for a fact, that reviewer [i.e., Local Guide] behaviors [are considered]. Are they in a pod of other reviewers [that review the same businesses]? Are they associated with review attacks?

Who knows what other characteristics are considered. For example, are they reviewing near their home [or did they visit the business]?

We don't know everything, but we can now say that there are four major aspects to the review algorithm [content, context, business and reviewer]. It manifests itself, you know, slightly differently depending on which one is triggering it.

It's interesting to me that it's a much more complex algorithm that can shut down whole categories, like Pregnancy Care, as we saw, or Ukrainian and Russian businesses, or it can shut off [reviews] for a [single] business. If the attack is too great, the business just gets shut off. They've automated all of this and they're nuking a fair number of reviews.

So as David pointed out in the green room, they're becoming sort of the new Yelp of the review world.

David: Just that they haven't necessarily learned from Yelp's mistakes in terms of prioritizing their reviewers as opposed to prioritizing small businesses.

Mike: Correct.