Confusing Tools, Reporting Fake Reviews, 'Highly Rated' LSAs

Marketing Tools Are Just Tools

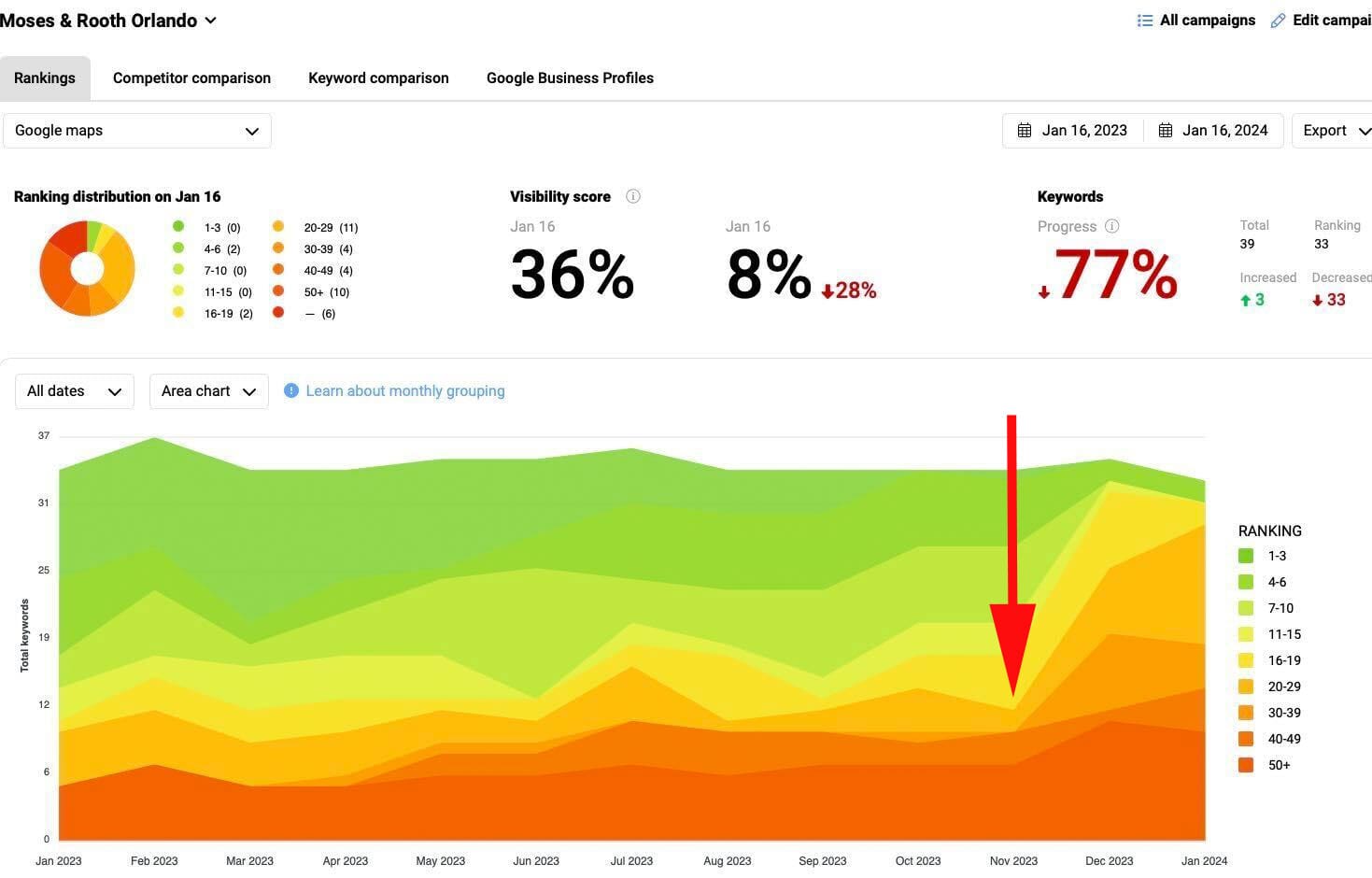

When you see a ranking chart that looks like the one shown here, your first thought is that the SEO has gone south. Whether it's due to penalties, competition, algo changes or all three, when you see a full quarter of steady, steep visibility declines, you start wondering just how far south it has gone.

I have a consulting relationship with criminal defense attorneys Moses & Rooth. I help them understand their metrics and trends, and I advise them and their marketing firm on directions and possibilities. Ranking charts are helpful for spotting big trends, and this one was pointing to something big. The bulk of the problem was with their Google Local/Maps visibility.

After spending a lot of time looking at all the big (and expensive to fix) things that might have gone wrong, I started wondering whether it was something small. Darren Shaw noted on Twitter that since Google started using open hours as a ranking factor more aggressively, "everyone seems to be open 24 hours." Well, not everyone. Darren's example, like my client, was attorneys.

My next step was to ask Whitespark when the reports were run. It turns out this ranking report was generated during the early AM hours (run time will soon be a selectable feature). I then set up daily ranking reports at Whitespark and in other rank tracking tools to track rank during both the day and the night. All signs pointed to the business doing fine during open hours but suffering during the early morning, when the original ranking report was run. Once Moses & Rooth followed their many competitors to "24 hours," the problem resolved itself.
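For anyone who wants to run the same day-versus-night comparison on their own exported rank data, here is a minimal sketch in Python. It is not how Whitespark or any other tool works internally. The open hours, the `is_open` and `median_rank_by_status` helpers and the sample numbers are all made up for illustration. The idea is simply to label each rank check as "open" or "closed" based on when it was run, then compare the median rank in each bucket.

```python
from datetime import datetime, time
from statistics import median

# Hypothetical open hours (an assumption for this sketch): Mon-Fri, 9:00 to 17:00.
OPEN_DAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4
OPEN_AT, CLOSE_AT = time(9, 0), time(17, 0)


def is_open(checked_at: datetime) -> bool:
    """Return True if the rank check ran during the listed open hours."""
    return (
        checked_at.weekday() in OPEN_DAYS
        and OPEN_AT <= checked_at.time() < CLOSE_AT
    )


def median_rank_by_status(checks):
    """Group (timestamp, rank) pairs into open/closed and take each median."""
    buckets = {"open": [], "closed": []}
    for checked_at, rank in checks:
        buckets["open" if is_open(checked_at) else "closed"].append(rank)
    return {
        status: (median(ranks) if ranks else None)
        for status, ranks in buckets.items()
    }


# Made-up sample data: two overnight checks and two midday checks.
sample_checks = [
    (datetime(2024, 1, 8, 3, 0), 14),   # Monday, 3 AM (closed)
    (datetime(2024, 1, 8, 13, 0), 3),   # Monday, 1 PM (open)
    (datetime(2024, 1, 9, 3, 0), 12),   # Tuesday, 3 AM (closed)
    (datetime(2024, 1, 9, 13, 0), 4),   # Tuesday, 1 PM (open)
]

result = median_rank_by_status(sample_checks)
print(result)
```

A large gap between the two medians suggests the decline is tied to when the report runs, not to a penalty or a competitor. Timestamps here are assumed to already be in the business's local time zone.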

Our take:

- Data tools like rank trackers are just point-in-time approximations of reality. They make assumptions and design decisions that are often not obvious.

- The story that the tools tell might not be the story you think it is. Practitioners need to step back, take a breath and ferret out what the data is really saying.

- Google's hours algo update is a bad update that could have been handled in more productive ways. Predictably, it's leading to a broad degradation of hours accuracy in some (but not all) categories. We discuss these issues in our most recent podcast.

Does Reporting Reviews Work? Sometimes.

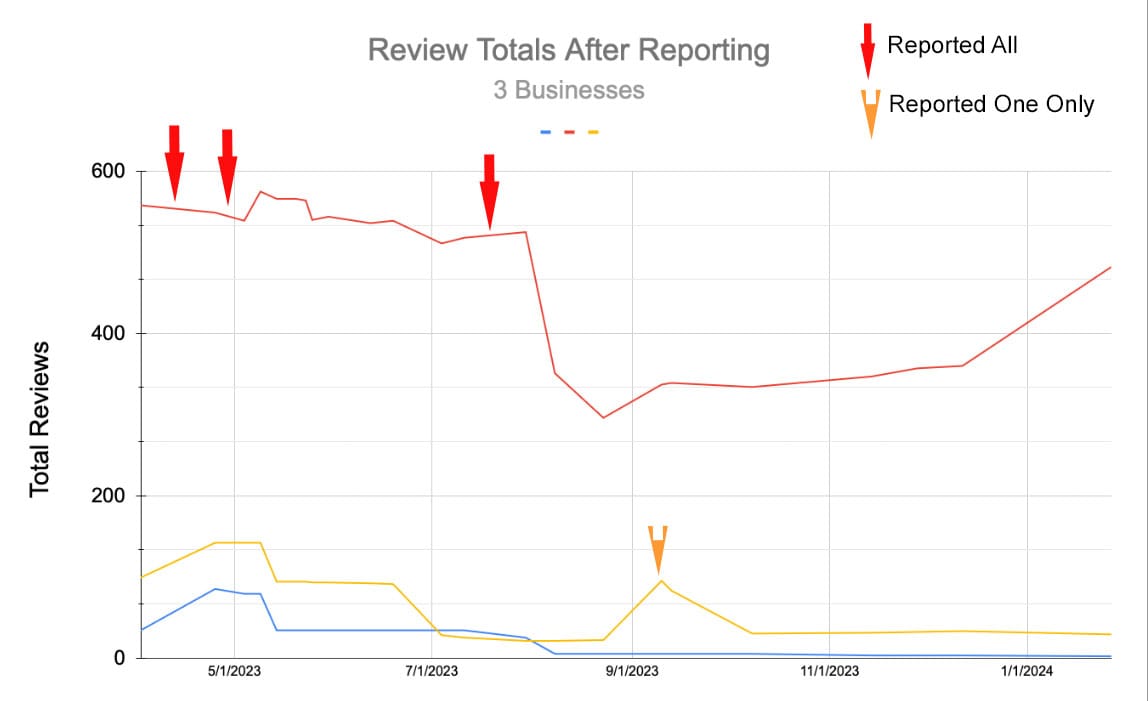

In September, Joy Hawkins noted that Google frequently does nothing permanent when a business is caught buying reviews; the business can simply keep buying them. My experience is slightly different. In a review reporting experiment I've been working on for almost a year, two out of the three businesses I reported had their fake reviews removed and seem unable to get them back.

Last April, while helping an HVAC company deal with a fake review attack, I uncovered a review network being used by many businesses to buy fake reviews. In late March, I reported three of the companies using that network via the Business Redressal form. A month later, I escalated the fake reviewer network in the Product Expert forum and within two weeks started seeing the reviews decline. In July, I reported seven common review profiles via the GBP forum and escalated in a Product Expert specific form, and saw another significant drop in review counts within two weeks.

When I saw a bump in reviews for one of the businesses, I repeated the process of filing a redressal form and escalating that case in the forum. The largest offender, a restaurant, seems to have once again been buying reviews and is getting close to replacing the removed reviews. But neither of the other two has added any reviews since October.

Our take:

- A persistent, multi-pronged approach to leveraging every Google form/forum seems to offer some relief and (at least partially) keep reviews from returning.

- The process for getting fake reviews removed is arcane. It's based on internal ranking algos of users and businesses and thus only successful sometimes.

- Google might have tightened up review removal during the past year, but it shouldn't be this hard.

Why LSAs Are a Microcosm of Google Today

Google confirmed that it's testing a "highly rated" callout for Local Services Ads (LSAs). This test, along with LSAs in general, encapsulates everything that Google is about these days, everything they could be and everything they might become.

LSAs are highly appealing to users. In Near Media research we have found that, when LSAs are present, use of the Local Pack drops by almost half. They also impact organic traffic. Even ad skeptics find the elements of LSAs appealing (see this short user video). Google knows this; they probably know user behavior better than anyone. They understand that additional "highlights" in the ad will increase LSA engagement and use.

LSAs were meant to solve the many problems that free Google Local profiles created, including fake listings and reviews. To the extent that LSA guidelines were enforced, they did. But as Google has scaled the product and replaced human curation with machine moderation, more cracks are turning into crevices. With the decline of human curation, abuses and illegal scams have escalated. Fake listings and hijacked reviews are rampant, and with them we are seeing the same bait-and-switch lead-gen we saw in Google Local. All the while Google has been increasing the pricing of these ads and NOT clearly marking them as ads.

Coulda, woulda, shoulda is what every parent tells their child in an effort to help them find a moral compass. That is, unless the child is so far down the path of blind self-interest that the parent no longer wastes their breath.

Our take:

- Of late, Google has been focusing on revenue at every turn, not on user benefit.

- It would appear that we are at or near an inflection point, driven by AI, of massive change on the internet, including in search.

- LSAs can be seen as a metaphor for how Google has been responding to these changes. It isn't a pretty picture.

Recent Analysis

- Near Memo episode 143: Will fired Google Raters be replaced w/AI?; SEO tools often hide the truth; do content strategies need to be updated after 'Hidden Gems' and the decline of Big Social?

Short Takes

- GBPs providing more transparency around business suspensions.

- Should realtors register their GBPs at the brokerage or home address?

- Moz: upgrade your local business about us page.

- G-Maps spam fighting: "suggest an edit" loophole, second reports.

- Menu Highlights lets restaurants easily update GBP menus.

- Exact match domains gaining influence in ranking once again?

- Some publishers/marketers abusing recipe structured data.

- Google launched discount codes in rich results in the US.

- SGE is Google's new commerce-search weapon against Amazon.

- An LLM/AI-powered Siri is coming perhaps as early as June.

- The DMA, Apple and (potentially) unintended consequences.

- TikTok wants to make all videos "shoppable."

- Europe becoming the de-facto regulator of the US tech industry.

- Regulatory pressure scuttles Amazon's purchase of Roomba maker.

- Walmart expanding 30-minute drone delivery pilot.

- Zoom readies launch of app for Apple Vision Pro.

Listen to our latest podcast.

How can we make this better? Email us with suggestions and recommendations.