Legal Hallucinations, Review Changes, 16% Mobile CTRs, Legal Ads in AI Mode

This is Near Media's new monthly legal marketing newsletter.

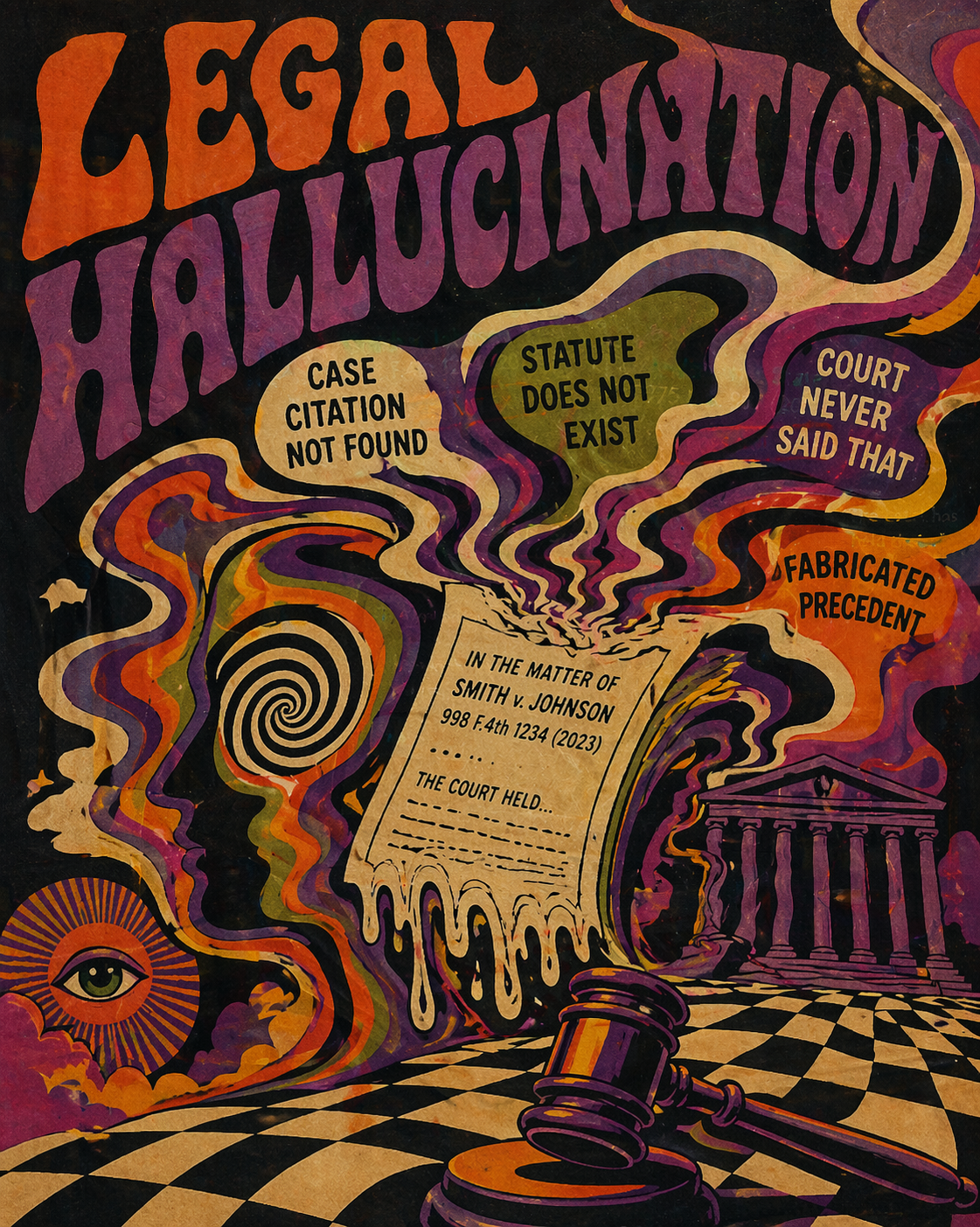

Legal Hallucination & Humiliation

Lawyers in an Oregon case involving vineyard ownership were recently hit with more than $100,000 in sanctions by a federal judge for AI-generated errors and fabrications in their court filings. The case joins a growing list from around the US in which lawyers, including some at the most expensive "white shoe" firms, have been shamed and penalized for AI errors in legal documents. It's not clear which system generated the hallucinated material. But legal research vendors LexisNexis and Thomson Reuters both make strong promises about the accuracy of their products, and LexisNexis has claimed its tools are effectively "hallucination-free."

Researchers at Stanford and Yale decided to test those claims. They compared Lexis+ AI, Ask Practical Law AI (Thomson Reuters) and GPT-4 on 200 legal queries. They found that none of these tools had eliminated hallucinations, which still appeared in a substantial share of responses, even when retrieval-augmented generation (RAG) was involved. Lexis+ AI answered more questions and delivered accurate responses "about 65% of the time." Remarkably, Thomson Reuters' tool declined to answer about 60% of queries and delivered accurate responses less than 20% of the time. The failures the researchers found ranged from significant to subtle: reliance on irrelevant sources, citation of bad case authority, incorrect citations and more. The subtler errors, as opposed to outright fabrications, were harder to detect and therefore potentially more dangerous. The temptation to turn research and writing over to AI is great, but as this Oregon case shows, you do so at your own peril.