EP 255 - How Google’s Personal Intelligence is Quietly Revolutionizing (Everyone's) Search Results

In this episode we are joined by Garrett Sussman (iPullRank) to discuss his provocative 12-month study on AI personalization. We dive deep into how Google’s "AI Mode" uses your Gmail, Photos, and Calendar to tailor results—and why "unopened emails" might be influencing what you see next.

The search landscape is shifting from keyword matching to "Personal Intelligence," where Google, with a user's explicit consent, draws on their Gmail, Calendar, and Photos. Research conducted by Garrett Sussman and Profound suggests that AI search platforms, Google’s included, don't just find information; they infer a user's values and disposable income and steer them toward specific brand outcomes.

The Podcast Deets

The Opt-In Ecosystem (03:11 - 10:59): A look at the "Personal Intelligence" toggle that gives Google permission to draw on your Gmail, Calendar, Photos, and YouTube history.

The Persona Impact (12:22 - 21:07): How LLMs change their tone and recommendations based on whether a user makes $45k or $150k.

The Email Seeding Experiment (21:35 - 30:02): A breakdown of how sending specific emails can move a brand from the "bottom 10" to a top AI recommendation.

Key Takeaways

• Google’s Personal Intelligence is an opt-in feature that integrates Gmail, Photos, and YouTube history into AI search.

• AI search platforms (like Gemini and ChatGPT) change product recommendations based on explicit and implicit persona data, such as salary.

• Gmail content is a significantly stronger signal for AI personalization than Google Photos.

• Even unopened emails can influence the recommendations provided in Google's AI Mode.

• Google’s AI is moving toward an "agentic" model, acting as a partner that "solves" problems before a user even finishes their query.

👇 Watch by topic:

00:00 – Introductions and the Personalization Shift

03:11 – Defining Google’s "Personal Intelligence"

12:22 – The 12-Month Profound Study: How Persona Impacts Results

21:35 – Seeding Experiments: How Gmail Influences AI Recommendations

30:02 – The Privacy Paradox: Family Names and "Creepy" Data

Related Links

Building Personal Intelligence: A step towards truly personal AI

Interested in sponsoring this podcast or our newsletters? Please reach out to mblumenthal@nearmedia.co

Near Memo: Personal Intelligence & AI Mode Transcript

Greg Sterling: Hey everybody, welcome back to the Near Memo with the lovely and talented Mike Blumenthal and me as always, Greg Sterling. And today we have another special guest with us, Garrett Sussman, who's the director of marketing at iPullRank. And many of you know him from his prolific LinkedIn activity. And he just spoke at SEO Week in New York. And this is why he's on the show today, because he had a very, very provocative, interesting and relevant presentation about personalization, Personal Intelligence, and AI Mode. We want to get into that, and we may decide to break this into two, depending on how it's going, because there's so much to talk about. We're going to talk about a really provocative year-long study that they ran with Profound that got into the mechanics of personalization. How does this impact local? How does this impact SEO? And what does the near-term future look like with personalization, AI Mode and other things? So welcome, Garrett. Glad to have you on.

Garrett Sussman: Thanks for having me guys. Obviously I came from local, I got into SEO originally from working with Mike and GatherUp. And so it always holds a place in my heart. And we are in a weird space right now. So excited to dive in and get some very hot, spicy opinions.

Greg Sterling: Yeah, so for the folks that weren't at SEO Week and haven't seen your presentation, which we'll link to in the show notes, and I recommend people watch it, talk about what, sort of at the highest level, what did you talk about? What was the presentation?

Garrett Sussman: Yeah. So the focus, I think, in SEO, local SEO and AI search right now is hyper-personalization. It's something we're all familiar with in local. But in essence, I talked about how we need to, as marketers and SEOs, take into consideration how, through AI search, we are getting much more personalized results. It's very difficult to track. It changes our content strategy, it changes the way we approach business and AI search in general. And so I ran a bunch of experiments to show how that's happening, looking at what's coming and what's already here.

Greg Sterling: So personalization is one of the hallmarks of AI generally: ChatGPT, Claude, Copilot, presumably. It remembers, it has a long kind of context window in memory. It remembers what you asked before. You can make references to things that you previously talked about. And so there's that kind of personalization and it learns about your preferences. It learns about your biases and then it answers things. I mean, I see this with ChatGPT all the time, but it doesn't know information other than what I've given it. It doesn't know anything about my history or my behavior or my interests other than what I've explicitly told it. Now, this specifically is about Google and Personal Intelligence. Let's talk a little bit about what that is. Google announced that a few months ago, I think, or maybe last year. So talk a little bit about that.

Garrett Sussman: Yeah. So this was in January. We already know Google collects a lot of data about you, but you are basically now giving Google explicit permission to access different Google data sources in your ecosystem. We're talking Gmail, Calendar, Photos, YouTube history. We already know it looks at your Google search history. You're allowing Google, when relevant, to take that information, that hyper-personal private content, and use it to give you a better personalized result in AI Mode and in Gemini, and potentially ultimately in AI search results.

Greg Sterling: Yeah, the blog post was January.

Mike Blumenthal: So Personal Intelligence is on by default, but connected apps in the Google ecosystem are off by default. Is that correct?

Garrett Sussman: So it is something that you need to opt into. And once you opt into it, then when you do a search in AI Mode, it will actually reference a specific email from your Gmail or a specific photo.

Mike Blumenthal: But the memory is defaulted on, and the connected apps are defaulted off. That's what it looks like to me when I'm looking at the Personal Intelligence settings screen.

Garrett Sussman: It's defaulted on when you actually set it up. You have the ability to manage it, but the memory is defaulted on when you opt in.

Greg Sterling: Just to clarify, it's an opt-in, not an opt-out. They're not turning it on for everyone, and then people have to figure out how to opt out. It's something that you have to explicitly agree to do. In other words, share the contents of these apps, Photos and Gmail. What else is in there beyond that?

Garrett Sussman: Calendar, YouTube, search history.

Greg Sterling: And obviously, let's assume that Google were an ethical company and we could trust them. This would be a great benefit, right? It would be a convenience and the machine would prompt you with information that would be helpful and it would tailor responses to your interests and needs. And so it's a great idea in the abstract.

Garrett Sussman: Absolutely significant improvement of the product. I mean you just think about how much better your results are when you are logged into Google versus Incognito. Just multiply that times like a thousand.

Greg Sterling: So I mean, when I saw this, I absolutely did not opt in. My response was, no f-ing way am I going to do this. Part of me feels like I have to do this just to understand how it's working, as your presentation described. But as a consumer, as a person, it's like Google is already too intrusive and too manipulative. And I don't want to give it any more of my information because not only do I not trust Google, but then Google just becomes a conduit for all the bad actors out there where they can go in and say, hey, Google, give us all this data. I mean, it's already happening in the current government. Give us all this data about critics and dissidents and all of these. This is a China scenario. But let's not get too political here. I want to talk about the mechanics of this.

Mike Blumenthal: But as Garrett pointed out in his talk, even if you don't turn on the app permissions, one, memory defaults on, so it remembers all your queries, and two, it has your search history. So even without giving it access to your Gmail and your YouTube, whatever, it brings that into the AI memory. It knows a lot about you, whatever you choose to do or not do.

Greg Sterling: Okay, Garrett, would you add anything to that about sort of implicit personalization, where somebody hasn't explicitly said, okay, personalize my results with these different apps and the content? But as Mike said, the search history and other inputs that Google is getting, that's almost as good really, as a practical matter, I would imagine.

Garrett Sussman: Yeah, I mean, philosophically, I think if you want to participate in digital society, Google has all your information. We can all agree that whether we consent or not, to your point about Google as a corporate entity, it has the data. As for how they decide to use it, Personal Intelligence is a way for you to explicitly give them permission to do all sorts of creepy stuff with your data that in theory will improve the product and the experience. I feel like culturally and socially, we are not there yet, but I feel like that's the inevitable direction that we're going in. And so, you know, I'm not an important person, so I don't give a crap if they have access to my stuff.

Greg Sterling: But you are! You're on this podcast. So by virtue of that, we've recognized you as an important person. Well, I mean, there's a whole long conversation about privacy that we can have at another time, which is privacy and trust and all of these issues—this cluster of issues are very important. But there's so much material to get to. I want to focus on that.

Mike Blumenthal: Well, the one reason it's material here is because if apps are not opted into, for example, as a normal part of consumers' behavior, then some of what Garrett did is limited to the people that do that. You can't extrapolate it to everybody. And so one of the questions that I would have is how many people actually participate given it's opt-in. Now it's probably very few. But like everything with Google, sooner or later, it becomes default.

Greg Sterling: Nobody knows about this. People out in the world have no idea about any of this right now.

Garrett Sussman: There you go. No, we're still at like less than 1% of the population. I think Mike's point is right on. Liz Reid gave a presentation or was on a podcast where she said, we care about consent. I think AI Mode, we've seen growth. I got some SimilarWeb data where we're seeing like 25% of Google users are actually starting to use it because they are starting to force feed us AI Mode. The only reason all this is a big deal is AI Overviews were the default version of SERPs. If AI Overviews were opt-in, then we wouldn't be talking about AI search and LLMs in the same way that we have in our search world. You know, going into whether AI Mode and all of this will become a part of our daily process, I think it's inevitable. But to your point right now, same way ChatGPT is just what, 2% of the actual search market? Like even ChatGPT doesn't really move the needle, nor does Bing, but eventually, if Apple integrates Personal Intelligence with Siri, then all of a sudden that market expands significantly.

Greg Sterling: Well, what our survey data show is that people—I mean, I've said this a million times—people really like the conversational experience. They prefer the conversational experience to traditional search. Traditional search is in certain cases more efficient, but all things being equal, people, most people are going to say, either I want to use a combination of the two, or I prefer AI Mode explicitly or some equivalent conversational kind of interface. We have both survey data and behavioral data. We were looking at it in the context of choosing a PI lawyer. Let's just say directionally, people will embrace these tools because they like the experience better. And the question is, can they trust the underlying data? But that's a little bit of a tangent. I think we can make the assumption that AI Mode, because Google is, as you say, force feeding it, at every turn it's presenting it as a 'try this, look at AI Mode.' And so I think we can assume that the numbers will continue to increase. But let's talk about your study.

Garrett Sussman: To your point, there's two mental models, right? The way that we use search and we've been trained to use search is different than a conversational search bot. Like we've been trained to use keyword-ese to be efficient, use a couple of words to get the information we need because we all know how Google operates. But Google has been implementing natural language processing for decades now, like with MUM and BERT. And so once you get into this chat bot model, I think the way your brain processes it and uses it is different. So that context is a big thing. We did an AI Mode study last year as well with user data and behavioral interviews. Our local search was on healthcare, finding a healthcare provider. And seeing people actually have the conversation around insurance and making that be a qualifier using natural language is a completely different experience than the way people use search.

Greg Sterling: Right. So you get a lot more about their intent and you get a lot more about what their priorities are and what's really important and what are the deal breakers and so on and so forth. OK. So let's talk. In the SEO Week presentation, you talked about a 12-month study that you did with Profound that was really fascinating that proved essentially the thesis about personalization. That all of the data that was kind of coming into the mix was changing search results depending upon the persona. Talk about that. What did you do and what did you find?

Garrett Sussman: Yeah, there are two experiments. So the way I broke it down in this framework is when we're thinking about the personalization interaction with these LLMs, there's explicit context and implicit context. There's the implicit context that it's taking that you are not saying in your prompt—that it knows about you, location, and what we're talking about with Personal Intelligence. But then there's the explicit context where you're actually telling it about you. Like I just mentioned about finding a health provider that I need an insurance that is under this provider when I'm looking for a doctor. So my study with Profound is I gave it explicit context where I set up four different personas: I am this gender, I make this much money, this is my job, this is what I'm into. And then I said, 'what is the best coffee machine for me?' 'what is the best running shoes?' across four different products. And we ran that across eight different AI search platforms for 12 months, over a hundred thousand prompts to see how the results were influenced. And what we found is when you give that explicit context—when it knows I make this much money—it makes different coffee machine recommendations, statistically significant across that data. Now, this is a kind of lab environment. It's not in the wild. So, you know, the way that we extrapolate any of these types of findings, I think we need to take everything with a grain of salt. And we've seen it change over time. Over that period, I saw ChatGPT move from four to five. I saw Gemini go from two to three. But for instance, if we told them that this person makes $150,000 per year, they were recommended much more expensive coffee machines versus someone who only makes 45,000 a year and they got like a Keurig recommendation. And so we saw this across all different brands that this explicit context very much influenced the results. 
And one other really interesting aspect of the study was the language that they used in the LLM's output was different. So for instance, when it comes to salary, we found that it used premium language. It made assumptions that the person had disposable income.

Greg Sterling: It's making assumptions about values.

Garrett Sussman: Exactly. Whereas if they didn't have as much money, you're like, 'you might need to consider whether you even get a coffee machine. Here's your budget.' So there are brand implications. There's audience research implications. That was the explicit experience.

Greg Sterling: Yeah, that's super fascinating. And one of the things you mentioned, you referenced Kevin Indig's research, and there's been a bunch of research—Profound has done this research. And what it sort of collectively shows—and this goes to the debate about how influential LLMs are—is that people are using LLMs, especially in a product context, to get a short list of recommended products. So 'I'm looking for a coffee maker. I want to see the three best in this price range, based on reviews and longevity and whatever the considerations are.' And then it will show you those recommendations. And the studies show that very often people will take that information and that becomes the competitive set that they choose from. And then they may go off and do subsequent research or get reviews. But this is setting up their sort of universe of choices. Now they can break out of it if none of them are satisfying. But it's not like going to Google and seeing 10 options or 20 options or that product grid where you've got 16 different things going on. It's like, 'here are the three or here are the four,' and that conditions people's subsequent choices. If that's true, if that holds in the real world—and my anecdotal experience is pretty consistent with that—then there are pretty profound implications for these LLMs recommending, 'here are the running shoes, here are the places to stay,' et cetera. You're really steering people toward—it's not just 'here's a result, click it or don't click it'—it's like 'we're steering you toward these outcomes' and it's pretty significant.

Garrett Sussman: I mean, there's a choice paradox. We live—like in the '70s when you went to the grocery store, there's what, like a hundred products in the grocery store? Now you walk into a grocery store and there's like thousands and thousands. I think we are overwhelmed with choice. And I think over time, people want to be given just a few options that are actually relevant and personalized for them. All these studies are tricky because search behavior is changing very rapidly. There was another study talking about kind of like a magical realism when it comes to AI. People who are not familiar with AI, they think it's magic. They are skeptical, but they're also willing to trust it. I think as that trust is built over time and they have positive experiences with their AI interactions, that's going to be the way that people search. So to your point, there are implications for businesses with this technology.

Greg Sterling: I mean, you referenced the trust or lack of trust in AI and there's a lot of data on that. I think it's a very nuanced conversation because it depends on context, depends on what you're doing, what you're looking up. And what people say—what people used to say in the beginning—is 'yeah, I totally trust it.' Two thirds would say 'yeah, I trust it.' Now what people say is, 'I trust it, it depends,' or 'I trust it somewhat.' And so the users have gotten more sophisticated. They're still using it independent of the question of trust. It's not like 'I trust it, I use it.' It's like 'I'm using it, but I may in this particular situation not trust it or I validate with something else.' I do the research and then I go to Google to verify that this is accurate information. So there's a lot of nuance. But anyway, talk about gender and job title in the presentation. Gemini was inferring salary based on these. Talk about that because that's interesting as well.

Garrett Sussman: Yeah, there are model-to-model differences. And the other thing, as you called out: if I can encourage one thing to any SEOs listening to this, it's run experiments, do your own research, because there are no blanket statements anymore. Everything, to your point about the nuance, is super specific. We wouldn't have the same approach to a city that we would to a rural location and the way that people search there and who searches there. But for gender, what was interesting was we did some citation research on shoes. And what we found was when we examined the citations by gender, the female personas' citations included references to women-driven running shoe websites. They did not appear in the citations for the male personas. So when you're starting to think from a digital PR standpoint and where you want to appear, you need to make sure that you are showing up for your ideal persona because there's going to be selection bias based on that context as well that makes it more complex and messy.

Greg Sterling: Right, and there's a kind of underlying discussion about whether the LLMs are biased. What are the assumptions LLMs are making about people's choices and tastes and who should be shopping with what?

Mike Blumenthal: 45-year-old white programmers in California.

Garrett Sussman: Great book reference: if you've never read it, in 2020 Brian Christian wrote 'The Alignment Problem.' And he talks about this specifically. We in the SEO space have all got obsessed with the idea of vector embeddings, right? But the training data, to Mike's point, is Western hemisphere based on a ton of English writing historically. I think it was like in the nineties we started to move from using male pronouns for everything and all that is baked into the Common Crawl data sets. Yes, there are situations that depend on RAG where it actually retrieves up-to-date fresh information from the internet, but the whole sort of training data is the history of the internet, which is problematic.

Greg Sterling: Yeah. So let's talk about the seeding piece of this. One piece of this that was really interesting is you were testing how different pieces of content, emails and images, would affect the recommendations because Google is starting to draw upon Gmail and Photos and YouTube videos. So tell us about that aspect of the study.

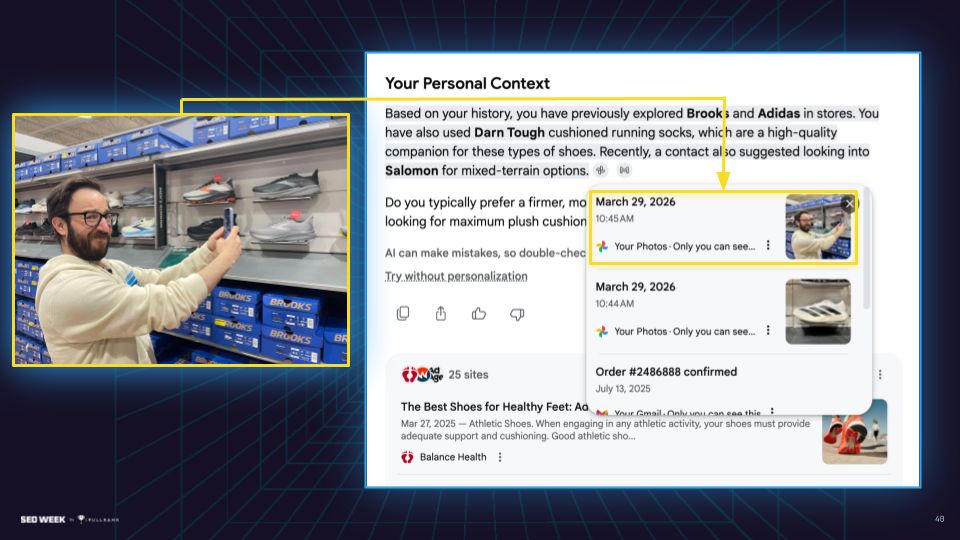

Garrett Sussman: So this was a Personal Intelligence experiment that we ran over three weeks. Me and two of my colleagues at iPullRank, Michael Tando and Kate Dombrowski, basically set up three different accounts. We had a completely clean Gmail/Google account that wasn't used, tabula rasa, completely not connected to anything. Then we had a second completely clean Gmail account that we connected to Personal Intelligence; we opted in. And then the third one was my ridiculously noisy personal Gmail account with decades of emails and photos and everything connected. And I connected that to Personal Intelligence. Don't judge me! And so what we then did is we set up an experiment where over two weeks, every single day, we ran about 50 prompts. There were eight different products across everything from banks to shoes and productivity tools. And then we had a set of six prompts that are 'what are the best versions of this?', 'what are the best versions for this specific attribute?', 'what are three recommendations based on what you know about me?'. And so they all had the same structure. And we ran it for three weeks. And then halfway through what I did is I sent an email from a different account to each of these accounts for each of the different products saying, 'hey, I recommend this brand. I like it because of X, Y and Z.' And then I went to a local store and I took photos of all the products and I uploaded all those to Google Photos to see how it would influence the results. And it was statistically significant for this specific data set. For our data set, we saw a significant lift in recommendations across the board for all of these products, mostly from email. Photos did not have the same impact, but we saw the products that I seeded in the emails were not showing up in the original subset. They were like the bottom 10, they really wouldn't show up.
After the seeding, they consistently had about 60% to 80% visibility in the recommended results for the accounts connected to Personal Intelligence, and AI Mode actually referenced those emails consistently.

Greg Sterling: So after the emails were sent, those results started showing up in the accounts opted into Personal Intelligence.

Garrett Sussman: Precisely. A lot of the times you would get the context in AI Mode saying like, 'based on this email that you received from Garrett Sussman, we think you would like this Salomon running shoe brand because it's great for durability.' It would actually parrot the copy from the email. So it was very much influencing the recommendations pretty clearly from the experiment.

Greg Sterling: So why do you think that email was weighted so much more heavily as a signal than images?

Garrett Sussman: That's a good question. Mike's done a lot of research on visual recognition and Google's tools. And while it's really excellent, it isn't as specific. There's a lot of noise in photos, I think.

Mike Blumenthal: And there's more processing costs. I mean, the reality is that getting to an understanding of a photo takes a whole extra step. We've already got the text in the email. That's easy to bring into a recommendation. It's explicit. And there's nothing explicit in a photo. Plus you got to process it to even get what's implicit in the photo. And I would assume the costs are a big driver here.

Greg Sterling: Yeah, that's probably a good insight. If you take a picture inside a store, Google may have no idea what the intent behind that photo is. But you even used some fake brands, and you said those were recommended.

Garrett Sussman: Yes, so as an additional part of the study, once we saw statistical significance, we on the last couple of days added in the same email format and the same photo format for completely fake made-up brands. And those were recommended consistently as well, which I guess is not really surprising. Highly recommend the PDF that Google wrote on Personal Intelligence, acknowledging a lot of these potential limitations of AI Mode. In my talk I kind of joked that email scam marketing is back. I would hope that Google would get better at identifying the issues there, but it's still an LLM. It still lacks a lot of control, even though it's in the product.

Greg Sterling: And you said—I mean, this was fascinating—even unopened email was influential.

Garrett Sussman: Yeah, there were two really surprising cases. One is a newsletter that I received over a year ago for productivity software tools that was cited in AI Mode. It referenced three different products. And I went back to find the email because it showed me the subject line. It was an unopened email. So then I opened it. I found it right in the middle of the body where this guy, Marco Giordano, talked about this product. And so that's nuts. There's a lot of noise out there, Greg.

Greg Sterling: You don't really like it if you're not opening the email!

Mike Blumenthal: Well, the reality is if Google has aspirations to be your agentic partner, they need the information from all the emails you didn't have time to read because they're going to solve this. Whatever information problem is in that email, they're going to solve it for you.

Greg Sterling: Well, Meredith Whittaker, who's the president of Signal, has talked about agentic work. For agentic work to work, people have to give it access to all kinds of things: calendar, bank accounts, credit card information, so on and so forth. And it needs kind of holistic access and information to be able to sort of carry things out. And this is sort of a baby steps version of that, right? I mean, it's sort of the foot in the door to get us accustomed to giving Google... I mean, Google already has my credit card information on file with Google Pay, and I just click a button and there it is. Talk a little bit about transactional emails.

Garrett Sussman: To that point, one interesting thing that came up in the study before we even seeded the emails is Google has all of our receipts and our confirmation emails. And, my goodness, Google loved telling me that I bought stuff. It was uncomfortable because you're getting this output about hoodie recommendations. And it would say, 'well, clearly you love Reigning Champ, Aviator Nation. Here's the three times that you've bought it in the last year.'

Greg Sterling: Yes, I was gonna ask you about that.

Garrett Sussman: And as a human, I did not like that, but it was interesting that it over-indexed on these transactional emails. One next step of the study that I have not done yet is I am going to go through all of the links to the emails that we got. I want to see what percentage are transactional emails, what percentage are marketing emails, what percentage are those personal emails? Because I'd be very curious. I didn't see a ton of marketing emails, but we did get subscriber updates or ClickUp emails about product marketing updates. So they did come. It just wasn't as explicit as the personal.

Greg Sterling: We have to assume that Google is going to refine this and that the balance of the weighting of the signals will evolve over time. This is right now, they're just very much in an experimental phase. You also talk about your family's names showing up in some of these recommendations. And that was weird and somewhat uncomfortable.

Garrett Sussman: Yeah, uncomfortable. It is uncomfortable. I remember because it was last Hanukkah, I was looking for gifts for my daughter. And I might have mentioned her name once—Sammy. And so then when I was looking for streaming network recommendations, it gave a very personal output saying, 'hey, knowing that you're in a family, your daughter Sammy loves Bluey. We would recommend Disney Plus because your wife can watch HBO Max as well and blah, blah, blah.' What was interesting and what is very tricky with all of this too is we know that we have explicit citations from the Gmail and the Photos. We do not have explicit citations from our search history. And one thing I called out in the study, which was really interesting to me, is when I had a general query—'recommend a streaming service for me'—it would go into that personal recommendation. And then in the citations, you could see a CNET article that said 'the top 10 family streaming services.' And I did not give any context of that in my search. So there is a lot of implicit data that's being pulled in in the results.

Greg Sterling: And I would argue that Google is probably going to have to focus on that because once things like your family members' names start showing up and these kinds of very personal details, it's going to freak people out. If you look at all the privacy surveys, we are in a very different position today than we were a decade ago when these tech companies were seen as cool and really innovative. Today, we've got the bro-ligarchy and there's this really adversarial relationship, I think, between technology and users. Users are engaged with technology because they have to be. Everybody's using social media. Everybody's using search. But I think people are very ambivalent. And I don't think Google can take anybody's consent or participation for granted. So what's going to probably wind up happening is a lot of these implicit signals will be brought to bear on this. You know, they're going to have to find the right balance between something that seems too intrusive and something that is genuinely useful and helpful.

Garrett Sussman: I think there's a lot of nuance about audience types. It's going to be very interesting to see different demographics and how they kind of encounter this and how they interact with it. For the past couple of years, we've seen people preferring to go to TikTok or more people going to Reddit for their searches. I think as long as Google is the default version on many devices and ecosystems, people, whether they have a problem with this data or not, will continue to engage. I think people—myself included—we have our laziness gene in us. You know, we don't want to expend too much cognitive energy switching from the defaults, whether that's a new channel or a new way of searching.

Greg Sterling: Of course. Right, and there's data that backs that up. There was a really interesting study that paid people to use Bing versus Google and tried to measure whether that would continue after the end of the experiment. And for a small percentage of people it did, but most people went back to what they were comfortable and familiar with. So I want to break at this point because I think this is a lot of content for one episode, and we will bring you back for part two of this where we're going to talk about what the implications for local are, SEO in general and measurement, and how do marketers respond to all of this. There's a lot of important things in shifting your perspective and shifting your tactics. Then we'll do a little bit of prognostication about the near-term future. Thank you very much, Garrett Sussman, and we'll have you on immediately after we conclude this for part two. OK, thanks.